Week 260

Echocardiogram Foundation Model, AI-Assisted Screening, Diabetes

In Week #260 of the Doctor Penguin newsletter, the following papers caught our attention:

1. Echocardiogram Foundation Model. Echocardiography is the most common cardiac imaging modality, using ultrasound to visualize heart structure and function. A comprehensive echocardiography study typically captures 50-150 videos from different anatomical views, each optimized for visualizing specific cardiac structures. Previous AI models for echocardiography have been limited to single-view, single-task approaches that cannot synthesize complementary information from the multiple views captured during a full exam.

Vukadinovic et al. developed EchoPrime, a multi-view video foundation model for comprehensive echocardiogram interpretation trained on over 12 million video-report pairs. EchoPrime consists of four key components: a video encoder, a text encoder, a view classifier, and an anatomical attention module. The video and text encoders were trained contrastively on echocardiogram video clips and the corresponding cardiologist reports to learn a joint video-text representation space. The view classifier was trained to assign each ultrasound video to one of 58 standard echocardiographic views. For each section of the echo report (Left Ventricle, Mitral Valve, Aorta, etc.), the anatomical attention module uses multiple instance learning to assign importance weights to each view based on the anatomical structure being evaluated. For example, for prediction tasks involving the IVC (inferior vena cava, a large vein), the model learned to focus on subcostal views, where the IVC is clearly visible. During inference, EchoPrime retrieves the 50 most similar historical reports using anatomy-weighted embeddings and aggregates their findings to assemble the final report. When tested across five international healthcare systems, EchoPrime matched or exceeded the performance of task-specific models while demonstrating interpretability through learned view weightings that align closely with how expert cardiologists prioritize different imaging views for each anatomical structure. Notably, the model can identify cardiac diseases not typically diagnosed by echocardiography alone: without supervised fine-tuning, simple k-nearest neighbor probing on EchoPrime’s video embeddings detects cardiac amyloidosis (AUC 0.96) and ST-elevation myocardial infarction (AUC 0.92), suggesting the model has learned clinically meaningful representations of cardiac pathophysiology.
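To make the retrieval step more concrete, here is a minimal NumPy sketch of anatomy-weighted retrieval for a single report section. It is an illustration under stated assumptions, not EchoPrime's actual code: the function names, the weighted-average pooling, the cosine-similarity ranking, and the mean-vote aggregation are all hypothetical choices, and the per-view weights are simply passed in rather than learned.

```python
import numpy as np

def l2_normalize(x, axis=-1, eps=1e-12):
    """Scale vectors to unit length so dot products act as cosine similarity."""
    return x / (np.linalg.norm(x, axis=axis, keepdims=True) + eps)

def study_embedding(video_embs, view_ids, view_weights):
    """Pool per-video embeddings into one study-level vector for a section.

    video_embs:   (n_videos, d) embeddings from a video encoder (assumed given)
    view_ids:     (n_videos,) predicted view index for each video
    view_weights: (n_views,) non-negative importance of each view for this
                  section (hypothetical stand-in for learned attention weights)
    """
    w = view_weights[view_ids].astype(float)
    if w.sum() == 0:
        # No informative view was captured: fall back to uniform weights.
        w = np.ones_like(w)
    w = w / w.sum()
    return l2_normalize(w @ video_embs)

def retrieve_top_k(query, report_embs, k=50):
    """Return indices and similarities of the k most similar report embeddings."""
    sims = l2_normalize(report_embs) @ query
    k = min(k, len(sims))
    top = np.argsort(-sims)[:k]
    return top, sims[top]

def aggregate_binary_finding(labels, top_idx):
    """Fraction of retrieved reports that mention a finding (assumed aggregation)."""
    return float(np.mean(labels[top_idx]))
```

In this sketch a section such as the IVC would use a `view_weights` vector that puts most of its mass on subcostal views, so videos from other views contribute little to the query used for retrieval.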

Read Paper | Nature

2. AI-Assisted Screening. Transthyretin amyloid cardiomyopathy (ATTR-CM) is an increasingly recognized cause of heart failure, but its nonspecific early symptoms often lead to delayed diagnosis and a historically poor prognosis, with a median survival of only 3.5 years without treatment. Early diagnosis has become crucial for improving patient outcomes, as expanding treatment options are becoming available to stabilize disease progression.

Jain et al. conducted an AI-augmented screening program that successfully identified previously undiagnosed cases of ATTR-CM. They created ATTRACTnet, a convolutional neural network that predicts ATTR-CM risk using ECG waveforms, echocardiographic measurements, and clinical data. In a prospective trial across multiple hospital sites, the system achieved a 48% diagnostic rate among tested patients, which was 2.8 times higher than historical controls, with 88% of diagnosed patients initiating treatment within 3 months. This AI-assisted approach resulted in an 18% relative increase in new ATTR-CM diagnoses systemwide compared to the previous year. Retrospective analysis revealed that the algorithm could have identified these patients a median of 345 days earlier if it had been applied to their prior ECGs and echocardiograms. The program successfully detected cases in historically underdiagnosed populations: Hispanic and non-Hispanic Black patients represented 50% of trial diagnoses compared to 40.4% in historical controls, while the model maintained similar diagnostic accuracy across all racial and ethnic groups. These findings suggest that AI-assisted screening could meaningfully close critical diagnostic gaps and reduce treatment delays for ATTR-CM.

Read Paper | JAMA Cardiology

3. AI-Assisted Screening. In the US, mammograms are typically reviewed by only one radiologist. Such a single-reading paradigm may miss cancers, especially in women with dense breast tissue where tumors can hide in the imaging, and contributes to disparities where Black women experience 40% higher breast cancer mortality despite lower incidence rates. Would using AI to flag high-risk cases for specialist review catch more cancers while reducing inequities?

Louis et al. conducted a large-scale real-world study to evaluate an AI-driven breast cancer screening workflow, spanning more than half a million mammograms from diverse populations in four US states. The multistage workflow combined an AI detection tool with a “SafeGuard Review” system, in which high-risk cases flagged by AI but initially read as normal received additional review by breast imaging specialists. Comparing the AI workflow (208,891 exams) to standard care (370,692 exams), the study found a 21.6% increase in cancer detection rate with only a 5.7% increase in the rate of patients called back for additional workup. These benefits were consistent across all racial groups and breast density categories, with cancer detection increasing by 20-23% in each subgroup without creating disparities. Overall, this AI-assisted approach required additional expert review for only 8% of cases, yet substantially improved early cancer detection in the context of US breast cancer screening, potentially identifying an additional 34,000 cancers annually across US screening programs while maintaining equitable outcomes for historically underserved populations.

Read Paper | Nature Health

4. Diabetes. Despite multiple factors influencing glucose homeostasis, diabetes diagnosis and monitoring still depend primarily on a single episodic measurement of HbA1c.

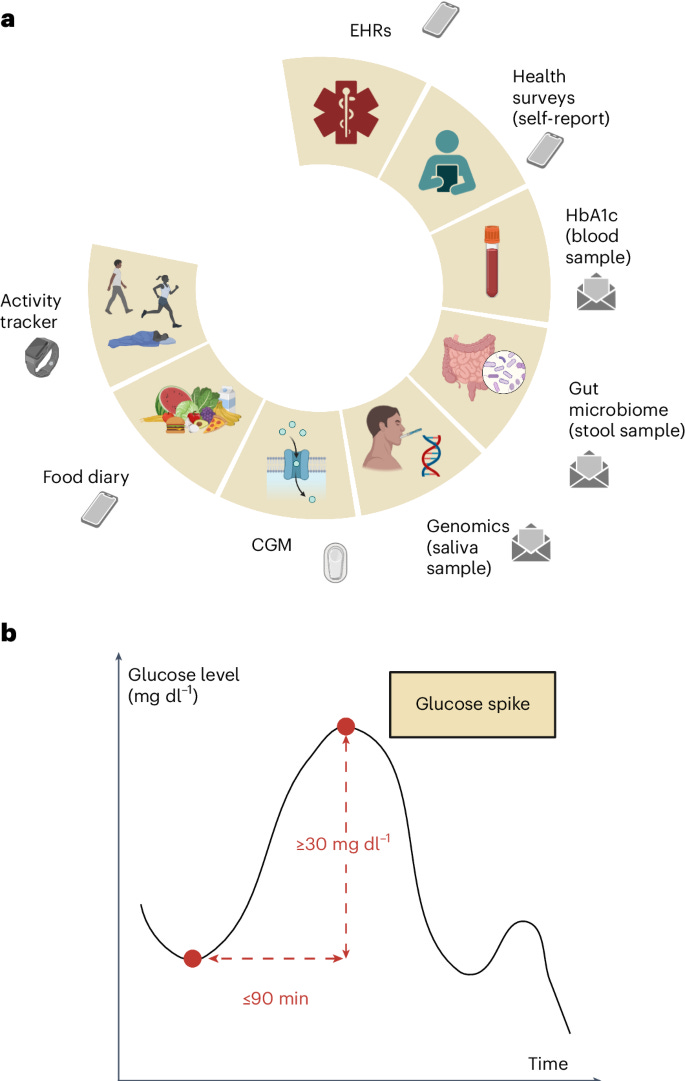

Carletti et al. conducted a prospective study that analyzed multimodal data from 347 deeply phenotyped individuals across the diabetes spectrum (normoglycemic, prediabetic, and type 2 diabetic) to understand glucose spike patterns and their correlates. Participants wore continuous glucose monitors and fitness trackers for 10 days while also providing blood, saliva, and stool samples for HbA1c, genomic, and gut microbiome analysis. The study found significant differences in glucose dynamics between diabetes states, with type 2 diabetes patients showing longer spike resolution times and more nocturnal hypoglycemia. It also found strong correlations between gut microbiome diversity and healthier glucose metrics, as well as between resting heart rate and spike resolution time. The authors also developed a multimodal AI risk assessment model that revealed substantial variability among individuals with identical HbA1c values, demonstrating that people with the same HbA1c can have vastly different glucose regulation patterns. These results suggest that traditional HbA1c measurements alone may mask critical differences in metabolic health, and that a more comprehensive approach incorporating continuous glucose monitoring, wearable device data, microbiome data, lifestyle factors, and other biomarkers could improve diabetes prevention, diagnosis, and treatment strategies.

Read Paper | Nature Medicine

-- Emma Chen, Pranav Rajpurkar & Eric Topol